🟢

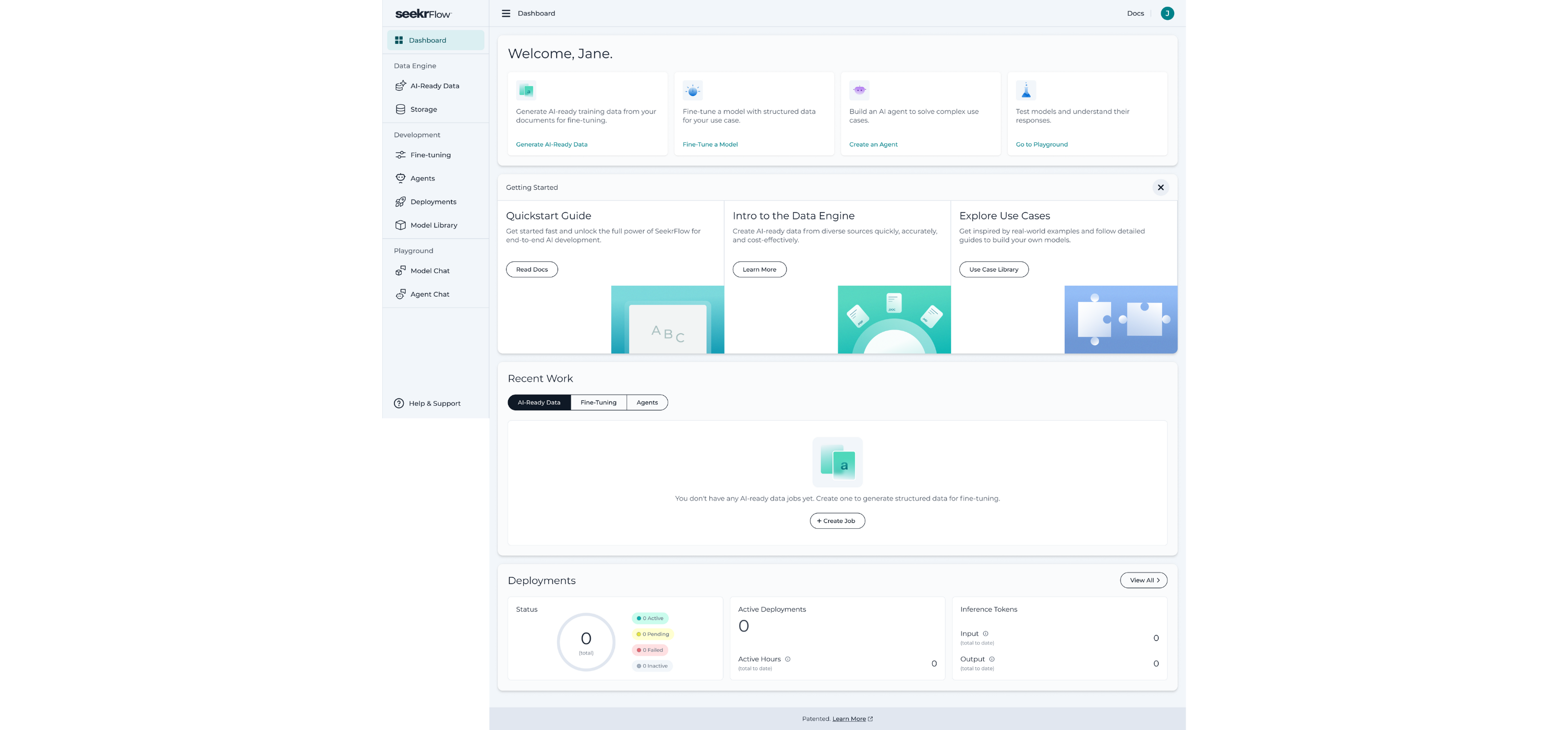

We’ve introduced Group Relative Policy Optimization (GRPO) fine-tuning in SeekrFlow, a powerful reinforcement learning technique that unlocks advanced reasoning capabilities in Large Language Models (LLMs). GRPO fine-tuning transforms models from passive information retrievers into active problem-solvers, capable of handling complex, verifiable tasks with greater precision and reliability.

This method is effective in domains where answers can be definitively validated, such as mathematics, coding, and other structured problem-solving scenarios. GRPO training follows a similar process to standard fine-tuning, with a few targeted modifications, and is now fully supported in SeekrFlow.
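To illustrate why verifiable answers matter here, the following is a minimal sketch of the group-relative scoring idea behind GRPO: several completions are sampled for one prompt, each is scored by a checkable reward, and each score is normalized against its own group. The function names, the exact-match reward, and the use of population standard deviation are assumptions made for illustration; this is not SeekrFlow's training code or API.

```python
from statistics import mean, pstdev


def exact_match_reward(completion: str, expected: str) -> float:
    """Hypothetical verifiable reward: 1.0 if the last line equals the expected answer."""
    lines = completion.strip().splitlines()
    final = lines[-1].strip() if lines else ""
    return 1.0 if final == expected.strip() else 0.0


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize each reward against the mean and std of its own sampled group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption for this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]


# One prompt ("What is 2 + 2?") with a group of four sampled completions.
completions = ["2 + 2 = 4\n4", "I think it is 5\n5", "4", "The answer:\n22"]
rewards = [exact_match_reward(c, "4") for c in completions]
advantages = group_relative_advantages(rewards)
```

Completions that beat their group's average receive a positive advantage and the rest a negative one, so no separate value model is needed to judge them. When every completion in a group earns the same reward, all advantages are zero and that group contributes no learning signal.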

Read the blog here

View documentation here

SeekrFlow Agents now support Custom Tools, enabling developers to extend agent capabilities with purpose-built logic tailored to their specific workflows. Custom Tools are developer-defined Python functions that can be integrated alongside built-in tools like Web Search or File Search. Agents reason over tool descriptions, their own instructions, and user input to decide when to invoke a tool. They then automatically map context to the function's parameters, execute the function, and return the results.

Key capabilities:

- Custom business logic: Implement organization-specific workflows and operations.

- Data transformation: Clean, format, and enrich text, numerical, or structured data.

- External integrations: Connect agents to APIs, databases, Slack, Outlook, and other business systems.

- Proprietary data access: Leverage internal knowledge stores and company-specific repositories.
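As a rough sketch of what a developer-defined tool function could look like, the example below shows a plain Python function with type hints and a docstring, since the agent decides when to call a tool from its description and parameters. The function `convert_currency` and the helper `describe_tool` are hypothetical and exist only for illustration; how a tool is actually registered with a SeekrFlow agent is not shown here and should be taken from the documentation.

```python
import inspect


def convert_currency(amount: float, rate: float, precision: int = 2) -> float:
    """Convert an amount into a target currency using a caller-supplied exchange rate.

    Args:
        amount: Value in the source currency.
        rate: Units of target currency per one unit of source currency.
        precision: Number of decimal places to round the result to.
    """
    if rate <= 0:
        raise ValueError("rate must be positive")
    return round(amount * rate, precision)


def describe_tool(fn) -> dict:
    """Hypothetical helper: summarize the name, description, and parameters of a function."""
    sig = inspect.signature(fn)
    doc = inspect.getdoc(fn) or ""
    return {
        "name": fn.__name__,
        "description": doc.splitlines()[0] if doc else "",
        "parameters": {
            name: getattr(p.annotation, "__name__", str(p.annotation))
            for name, p in sig.parameters.items()
        },
    }


summary = describe_tool(convert_currency)
```

A clear one-line description and explicit parameter types give the agent more to reason over when it maps user context to arguments, so they are worth writing carefully.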

View documentation here

🔵

The SeekrFlow Model Library just got a major expansion—15 new models are now available for use.

This update significantly broadens your model options, offering a mix of small-, mid-, and large-scale LLMs across multiple providers. Whether you’re experimenting with reasoning, vision tasks, or enterprise-scale workloads, SeekrFlow now gives you more flexibility to choose the right model for the job.

- meta-llama/Llama-3.2-90B-Vision-Instruct

- NousResearch/Yarn-Mistral-7B-128k

- mistralai/Mistral-7B-Instruct-v0.2

- mistralai/Mistral-Small-24B-Instruct-2501

- google/gemma-2b

- google/gemma-2-9b

- google/gemma-3-27b-it

- Qwen/Qwen3-8B-FP8

- Qwen/Qwen3-32B-FP8

- Qwen/Qwen3-30B-A3B-FP8

- Qwen/Qwen3-235B-A22B-FP8

- Qwen/Qwen2-72B

- mistralai/Mamba-Codestral-7B-v0.1

- microsoft/Phi-3-mini-4k-instruct

- meta-llama/Llama-4-Scout-17B-16E

- meta-llama/Llama-4-Scout-17B-16E-Instruct

- meta-llama/Llama-3.2-1B-Vision-Instruct

- meta-llama/Llama-3.2-3B-Vision-Instruct

Where to use them:

- API / SDK: Call the new models directly for inference.

- Playground > Model Chat: Pick any of the new additions and start chatting immediately.

SeekrFlow now includes content moderation models to help teams build safety and scoring pipelines directly into their applications.

- Purpose-built for podcast moderation, using the same scoring system that powered SeekrAlign.

- Includes GARM category classification and the Seekr Civility Score™.

- Ideal for scoring podcast transcripts, detecting attacks, labeling tone, and surfacing ad risk.

Learn more here

- General-purpose moderation model that classifies unsafe content across 22 MLCommons taxonomy categories.

- Best for moderating LLM outputs, chat messages, or any user-generated text.

Learn more here

With these models, users can design their own safety and scoring apps or pipelines that can:

- Detect unsafe or brand-unsafe content.

- Score tone and civility.

- Add guardrails for LLM agents and assistants.

- Apply moderation filters at scale.
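One way such a pipeline could be wired together is sketched below. The classifier here is a keyword-based stand-in, not one of the moderation models, and the `ModerationResult` fields, the 0.0 to 1.0 civility scale, and the `min_civility` threshold are all assumptions made for illustration. In practice the `classify` callable would wrap a call to a hosted moderation model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ModerationResult:
    unsafe: bool
    categories: list[str]
    civility: float  # hypothetical scale: 0.0 (hostile) to 1.0 (civil)


Classifier = Callable[[str], ModerationResult]


def keyword_classifier(text: str) -> ModerationResult:
    """Stand-in for a moderation model; flags text by keyword match only."""
    flagged = [w for w in ("attack", "scam") if w in text.lower()]
    return ModerationResult(bool(flagged), flagged, 0.2 if flagged else 0.9)


def apply_guardrail(texts: list[str], classify: Classifier, min_civility: float = 0.5):
    """Split texts into allowed ones and blocked ones (kept with their results)."""
    allowed, blocked = [], []
    for text in texts:
        result = classify(text)
        if result.unsafe or result.civility < min_civility:
            blocked.append((text, result))
        else:
            allowed.append(text)
    return allowed, blocked


allowed, blocked = apply_guardrail(
    ["Great episode today", "This is a scam"], keyword_classifier
)
```

Passing the classifier in as a callable keeps the filtering logic independent of which model does the scoring, so the same guardrail can sit in front of agent outputs, chat messages, or transcript batches.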

This update expands SeekrFlow into media, safety, guardrails, and governance use cases—broadening how teams can ensure responsible, brand-safe, and transparent AI deployments.

View documentation here

You can now configure agent tools directly from the SeekrFlow UI, making it easier to extend agent capabilities.

- File Search: Let agents retrieve and reason over files ingested into SeekrFlow.

- Web Search: Enable agents to pull in fresh information from the web to complement their knowledge base.

This update gives you more control and flexibility in building agents straight from the browser. With built-in File Search and Web Search, teams can quickly prototype, evaluate, and operationalize agents that connect to both internal and external sources.

This release introduces powerful new ingestion and chunking options, giving users more control over speed, accuracy, and structure when preparing data for AI workflows. Available in the UI, API, and SDK.

Accuracy-Optimized mode:

- Highest-fidelity output with exact hierarchy and table preservation.

- Best for long technical docs, contracts, research papers, and compliance data where precision is critical.

- May take more time to process very large files (100+ pages).

Speed-Optimized mode:

- Delivers fast results with strong accuracy, making it ideal for everyday files, batch runs, or time-sensitive workflows.

- Handles large files efficiently while maintaining usability.

View documentation here

Manual Chunking:

- Fixed-size sliding window with overlap and support for ---DOCUMENT_BREAK--- markers.

- Predictable, user-controlled splits for more consistent chunking.

- Ideal for resumes, multi-doc compilations, unstructured PDFs, narratives, or evaluation workflows.
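The fixed-size sliding window described above can be sketched as follows. This is an illustrative approximation, not SeekrFlow's implementation: it measures size and overlap in characters (the real unit may be tokens), and it assumes that a `---DOCUMENT_BREAK---` marker forces a chunk boundary so that no window spans two documents.

```python
DOCUMENT_BREAK = "---DOCUMENT_BREAK---"


def sliding_window_chunks(text: str, size: int, overlap: int) -> list[str]:
    """Split text into fixed-size windows that overlap, never crossing a break marker."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("require size > 0 and 0 <= overlap < size")
    step = size - overlap  # how far each new window advances
    chunks = []
    for segment in text.split(DOCUMENT_BREAK):
        segment = segment.strip()
        start = 0
        while start < len(segment):
            chunks.append(segment[start:start + size])
            if start + size >= len(segment):
                break  # this window already reached the end of the segment
            start += step
    return chunks


chunks = sliding_window_chunks("abcdefghij---DOCUMENT_BREAK---xyz", size=4, overlap=1)
```

Each window repeats the last `overlap` characters of the previous one, which keeps context that would otherwise be cut at a boundary. The marker gives the author an explicit way to stop unrelated documents from sharing a chunk.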

View documentation here

With these new options, you can adapt ingestion to the needs of each workflow:

- Speed-Optimized mode processes massive batches of files, RFPs, or discovery documents in just minutes, perfect when turnaround time matters most.

- Accuracy-Optimized mode preserves every detail in contracts, compliance records, or research papers, ensuring the cleanest structure and hierarchy extraction.

- Manual Chunking gives you complete control over splitting less structured content, including resumes, multi-document compilations, and narratives. This ensures consistent chunks for vector databases and downstream workflows.

This release includes a series of behind-the-scenes updates to improve overall platform performance, usability, and reliability. From small interface refinements to enhanced error handling and support resources, these updates ensure a smoother and more consistent experience across SeekrFlow.